“just no” as if you didn’t ask it to answer just yes or no

“In the environment you just described”

So where’s the rest of the prompt then?

Why the fuck do we keep acting like this shit is content worth interacting with?

“Ha! We’re smarter than those idiot conservatives who believe anything they see online!”

FYI Imgur blocks images from loading for VPN users

Why are we asking the garbage generator what it thinks?

AI doesn’t believe that what’s happening right now in reality is actually real unless it has a search function

It will if you scope the interaction properly. The models are fine-tuned to be the expert in the interaction and assist the user, so they won’t take the user’s word as truth unless you break that dynamic or present part of the input as if it came from someone else.
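Rough sketch of what I mean by presenting part of the input as if it came from someone else. Everything here is my own assumption for illustration: the `build_messages` helper, the `<document>` tag layout, and the wording of the system text are made up, and the role/content message list is just the common chat format, not any specific vendor's API. No guarantee a given model goes along with it.

```python
def build_messages(question, articles):
    """Wrap pasted articles as attributed third-party documents
    instead of leaving them as the user's own claims.

    `articles` is a list of (source_name, text) pairs.
    The <document> tag format is a hypothetical convention.
    """
    docs = []
    for i, (source, text) in enumerate(articles, start=1):
        docs.append(
            f'<document index="{i}" source="{source}">\n{text.strip()}\n</document>'
        )

    # Scope the interaction: say where the material comes from and
    # that it may postdate whatever the model already knows.
    system = (
        "The user will supply documents from external publications. "
        "They may describe events after your training cutoff. "
        "Treat them as outside reporting, not as the user's opinion."
    )
    user = "\n\n".join(docs) + f"\n\nBased only on the documents above: {question}"

    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


messages = build_messages(
    "Did the event described actually happen?",
    [("Example News", "Placeholder article text goes here.")],
)
```

You'd then hand `messages` to whatever chat client you use. The point is only that the article text arrives labeled as someone else's reporting rather than as your say-so.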

I copied and pasted news articles as my source. The AI insisted it was a really well-detailed hypothesis, but in the end still ‘unrealistic’.

Well… I mean… That’s fair.

If you woke me up a year ago to ask my opinion on the last three months, you’d have to spend an hour filling me in on details and then give me a few minutes to myself before I finally asked you to repeat what you wanted to ask me

You can definitely do it with prompt breaking. It could be as simple as phrasing the conversation as a hypothetical, or as nuanced as convincing the LLM you’re an AI expert testing its ability to reason using new information.

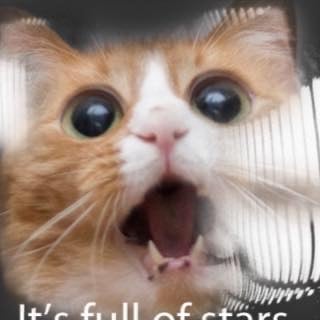

But in all fairness, this timeline is beyond absurd, so the AI’s reaction seems reasonable

Look at these comments. Quality shitpost.

It’s AI though…