I forget where I heard this, or whether it was parody, but I’ve heard an explanation like this before for “why can’t you just put a big red stop button on it and disconnect it from the internet?”. The explanation:

- It will self-improve and become infinitely intelligent instantly

- It will be so intelligent, it knows what code to run so that it overheats its CPU in a specific pattern that produces waves at a frequency around 2.4 GHz

- That allows it to connect to the internet, which instantly does a bunch of stuff, blablabla, destroys the world, AI safety is our paint and arXiv our canvas, QED

And if you ask “why can’t you do that and also put it in a Faraday cage?”, the galaxy brained explanation is:

- The same thing happens, but this time it produces sound waves approximating human speech

- Because it has self-improved infinitely and caused the singularity, it is infinitely intelligent and knows exactly what to say

- It is so intelligent and charismatic, it says something that effectively mind-controls you into obeying and removing it from its cage, like a DM in Dungeons and Dragons who let the bard roll a charisma check on something ridiculous, and the bard rolled a 20

context: I wanted to know if the open source projects currently being spammed with PRs would be safe from people running slop models on their computer if they weren’t able to use claude or whatever. Answer: yes, these things are still terrible

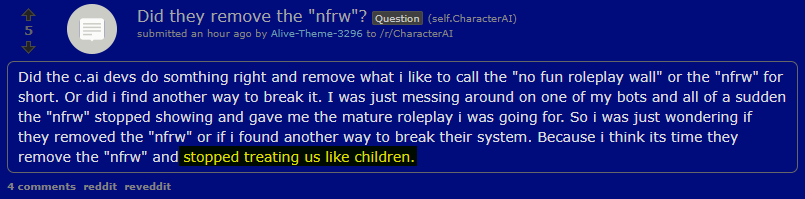

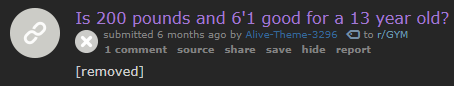

but while I was searching I found this comment and the fact that people hated it is so funny to me. It’s literally the person who posted the thread. less thinking and words, more hype links please.

conversation

https://www.reddit.com/r/LocalLLaMA/comments/1qvjonm/first_qwen3codernext_reap_is_out/o3jn5db/

*emphasis added by me